Last month researchers reported that the data memory-dependent prefetcher (DMP) on Apple M1 chips can be used to break encryption. (And there are indications that similar attacks might be possible on Intel silicon.)

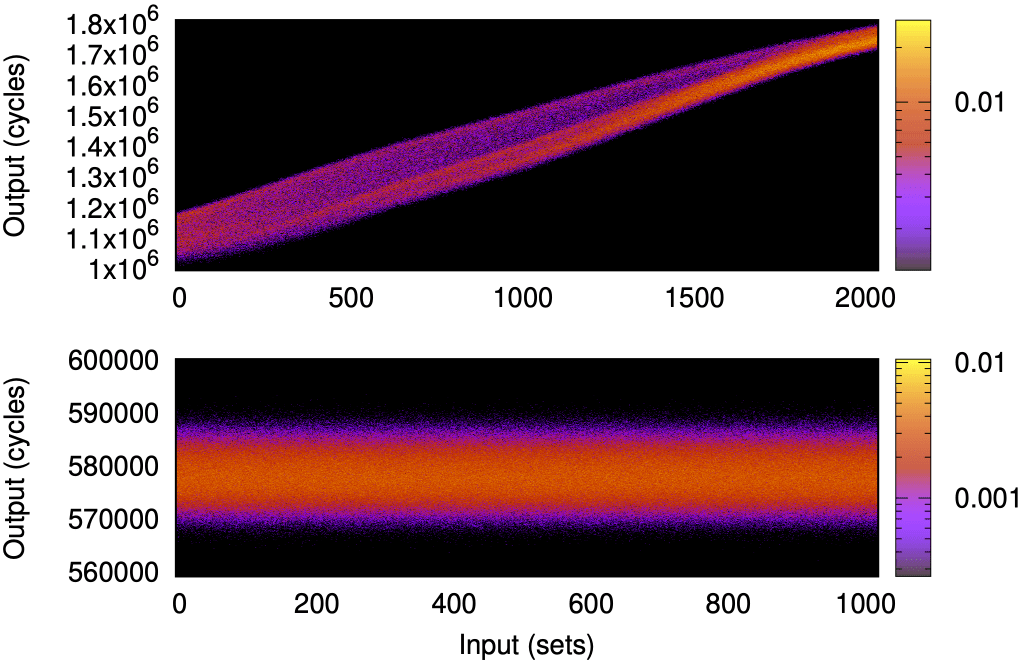

The researchers observe that any (64-bit) aligned data word, whose value matches bits [53:32] of its own address, is considered a pointer by the DMP. The DMP then speculatively dereferences this putative pointer and loads the target word into the (shared) L2 cache. The attack, dubbed GoFetch, is pretty ingenious: By feeding specific data (as cleartext) to an encryption process, and using prime&probe for observing the L2 state (as indicated in the figure), they can determine bits of the secret key, based on whether the DMP prefetches.

There are a number of interesting (in the Chinese sense?) observations to make here.

The mechanism was known to pose security problems

… but that problem didn’t seem to be taken seriously, presumably because no realistic attack was demonstrated. Specifically, the Augury work, published two years earlier, showed that DMPs could lead to disclosing memory contents, but the responsible people (in this case, Apple) didn’t seem to care.

Unfortunately that’s a consistent pattern of denial that ranges across the community and industry: Some academics point out that there’s a problem, but yeah, my system hasn’t actually been hacked, so I’ll just ignore it.

I’ve been through this myself. I’ve long been worried about exploiting microarchitecture for covert channels, and demonstrated how they could be systematically prevented, the approach we ended up calling time protection. Our argument was that if there’s a covert channel (allowing a Trojan to intentionally leak data) then that’s a problem. Because most systems execute plenty of untrusted code that must be assumed to contain such a Trojan, or could be highjacked into serving as a Trojan. (And, besides, any covert-channel mechanism has a high risk that someone will find a side channel, i.e. unintentional leakage, based on the same underlying mechanism.)

This only changed with the Spectre attacks: Spectre uses speculative execution to construct a Trojan from gadgets in innocent code, which then uses the covert channel to extract information. (And most people falsely talk about Spectre using a side channel, which doesn’t help raising awareness of this problem.)

Obviously, taking security seriously means dealing with problems proactively, not only when the horse has bolted. But that’s not how industry and our discipline seems to operate.

Time protection defeats the GoFetch attack (and many others)

The core idea of time protection is to spatially or temporally partition any contented microarchitectural resource between security domains. In the case of the L2 cache (used in the GoFetch exploit) this means partitioning between concurrent processes (eg. by cache colouring). This means that the attacker can only influence the state of its own cache partition (same holds for the victim, i.e. the encryption process in the case of GoFetch) and the prime&probe attack fails.

There will, no doubt, appear variants of GoFetch exploiting different parts of the microarchitecture (as there are by now several variants of Spectre), but time protection will stop them all, as it is a principled approach that completely prevents any microarchitectural timing channels between security domains.

Note that we also found that complete time protection is not possible on contemporary processors, as they lack a mechanism for resetting all microarchitectural state that cannot be spatially partitoned (that includes all on-core state). However, our collaborators at ETH Zurich demonstrated (constructively, by implementing it as a new instruction, fence.t, in an open-source RISC-V processor core) that adding such a mechanism is easy and cheap. So, why not just do it?

… But people keep inventing ad-hoc point solutions

While time protection is a principled approach to completely prevent microarchitectural leakage, and thus defeats the whole class of attacks that depend on them (which includes Spectre, GoFetch and many others, but also any Trojan in untrustworthy code), people keep inventing ad-hoc band-aids for specific attacks, which end up making hardware more complex (and thus error-prone) and only end up shifting the attackers’ efforts to the next battlefield. A great example of this is speculation-taint tracking, which massively complicates hardware, but won’t prevent new attacks (eg is unlikely to stop GoFetch).

It’s disheartening that the authors of the GoFetch paper fall in the same trap. They write:

Longer term, we view the right solution to be to broaden the hardware-software contract to account for the DMP. At a minimum, hardware should expose to software a way to selectively disable the DMP when running security-critical applications.

In other words, expose DMP at the HW-SW interface, making it (and software based on it) massively more complex, just for dealing with one specific attack vector.

I’m all in favour of improving the HW-SW contract. In fact, we have argued for years that this is needed!

But rather than exposing more implementation details that limit the flexibility of hardware designers, the extension should be minimal and high-level, as we have proposed: Identify which resources can be spatially partitioned (and how), and providing a mechanism for resetting all microarchitectural resources that cannot be spatially partitioned. The latter is exactly that fence.t is – a simple, principled mechanism that can be used to knock out a whole class of attacks.

And some shocking revelations by the OpenSSL maintainers

Much of the above is what I’ve come to expect. Annoying, but unsurprising.

What actually shocked me was this quote from the Disclosure section of the GoFetch paper:

OpenSSL reported that local side-channel attacks (i.e., ones where an attacker process runs on the same machine) fall outside of their threat model.

Read this again…

Taken at face value, this means that OpenSSL is not fit for purpose if you have any untrusted code on your machine, i.e. any code that you cannot be sure not to be malicious. This includes any third-party code that could contain a Trojan, or any code that has a bug that allows it to be highjacked.

Think about it. This means that OpenSSL is not fit for purpose on:

- a cloud hosting service that co-locates VMs/services from different users on the same physical machine (i.e. almost all of them)

- your phone that almost certainly runs apps that come from developers you cannot trust to produce bug-free code

- your laptop that almost certainly runs programs that come from developers you cannot trust to produce bug-free code

- most likely your car

The mind boggles.

Houston, we have a problem

Does anyone care?

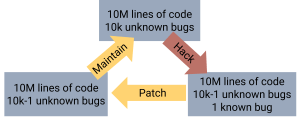

Machine learning is en vogue, being applied to many classes of problems. One of them is cybersecurity, where ML is used to find vulnerabilities in code, simulate attacks, and detect when an intruder has breached a system’s defenses. Ignoring that intrusion detection is an admission of defeat (it comes into play when your system is already compromised!) this sounds like a good development: helping defenders to find weaknesses faster, hopefully before the attacker does.

This rather optimistic view of the role of ML in cybersecurity ignores the fact that the attacker will use the same techniques to find weaknesses faster. Furthermore, let’s assume, optimistically, that ML speeds up the defense (proactively detecting weaknesses, detecting intrusions) as much as the attack (detecting and exploiting weaknesses). This is a pretty big assumption, as the attacker can choose where to attack, while the defender must defend everywhere. But even if this assumption holds, detecting vulnerabilities is only part of the defence: the defender must also remove those vulnerabilities, and that part is not accelerated by ML, as it still requires humans to analyse, modify, test, and deploy programs.

In other words, the “patch” part of the traditional, reactive patch-and-pray cycle of software debugging isn’t accelerated, only the “pray” part. So, rather than strengthening the defender, the net effect of ML is increasing the attacker’s advantage in the cybersecurity arms race.

This is not an argument for stopping the defensive use of ML in cybersecurity. It is an argument that ML is not the technology to win the cybersecurity war — it will, at best, delay the inevitable defeat. That’s still better than doing nothing, but it’s a fatal mistake to think it’s all you need to do.

In this respect, it is bewildering to see the widespread ML-mania everywhere in cybersecurity; for example, the Australian Government’s National Security Challenges for the National Intelligence and Security research grants program mentions ML a lot, but is surprisingly quiet about anything that will prevent attacks in the first place. Other countries don’t seem much better.

ML hasn’t changed the fact that systems will be compromised if they aren’t secure by design, and their critical components operate to specification. Cybersecurity work needs to focus on these fundamental approaches. Anything else buys us at best some breathing space and is at worst a detraction creating a fatal illusion of security.

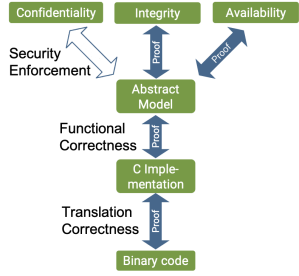

We need real cybersecurity more than ever, especially since the advances in ML shift the battlefield further in favour of the attacker. We need to re-focus on the fundamentals: Security-oriented design that enables proof of security enforcement, and implementations that can be proved to match the design. The seL4 microkernel and work based on it show that it is possible, but as a community, we need to continue to work on scaling these guarantees up to full, real-world systems. ML won’t do it for us.

This post originally appeared on 2022-11-08 on the ACM SIGBED blog.

In the final blog in this series I want to talk about how TS not only survived, but bounced back with a vengeance.

This is much more fun to write than the previous blog, which was truly painful. I had to spend hours trawling through emails that made me re-live all the insanity, dishonesty, deceit and other unethical behaviour we have experienced in CSIRO. Reading this still makes my blood boil.

Why did all this happen? Clearly, TS with its uncompromising culture of excellence and outspokenness was a misfit to the Data61 culture of mediocrity and incrementalism, and I knew that for a long time. It’s not hard to imagine that many people felt threatened by the combination of ambition and delivering the “impossible” that characterises TS. If all you can do is marginal improvements over other people’s work, or applying other people’s research to a narrow class of problems, then looking at TS must feel intimidating.

But I happen to think that the taxpayer deserves the best. And the taxpayer isn’t getting good value from Data61. Eventually the powers that be will realise this (after far too many tax dollars will have been wasted) and pull the plug. The earlier the better.

10 months later, where are we?

In short: TS is in great shape, the best it’s been in for years.

For years I had brought millions of external research funding into NICTA and then Data61/CSIRO every year. I was highly confident that within 6–12 months I would line up enough project funding to keep the group going, the main challenge was to keep the group alive until then. Turns out I was a pessimist: Lining up funding was even faster. In fact, I had never raised as much research money in any year as I have in the past 10 months.

But all the world’s project funding doesn’t help if there are no people to put onto those projects, and that was the prospect we were facing. Most people in TS had job offers within days, and many took them – who could blame them when the group seems to be dying?

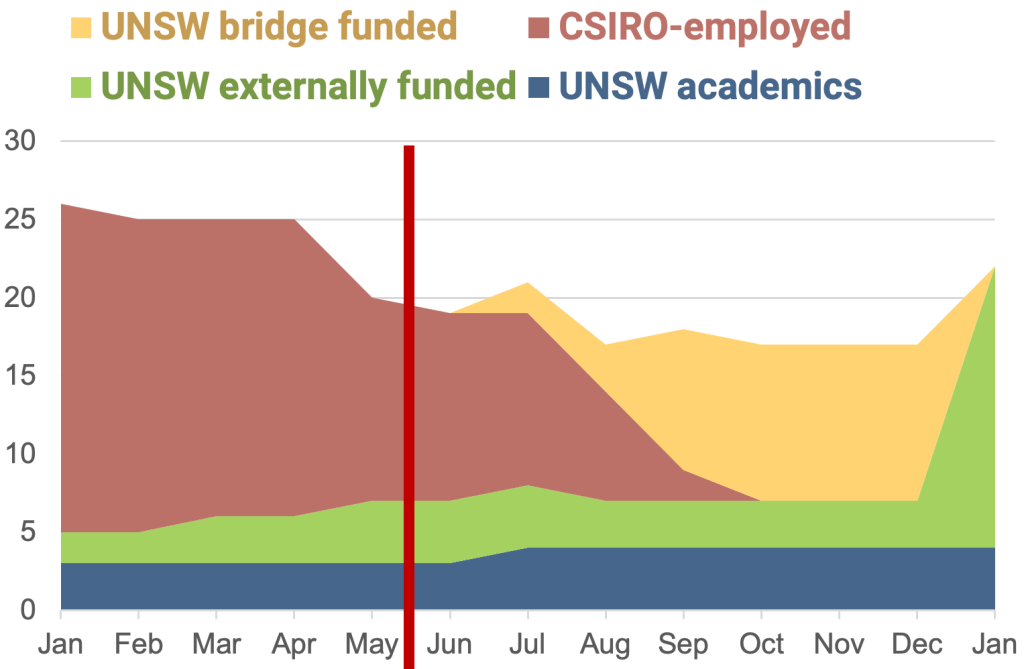

This is when Aaron, my UNSW Head of School, came to the rescue with his offer to fund the group to the end of the year – exactly the break we needed! The figure shows what happened: Between people being kicked out of CSIRO (the dark red downward slope) and the new funding cutting in (the steep green mountain) there’s a valley of death – bridged by the UNSW funding. Without that, TS would be dead.

What’s not visible from the graph is the turnover in people. Despite having the (initially short-term) funding, we lost many people, especially those highly skilled in operating systems (OS). This is when the second amazing thing happened: The strongest influx of students (current ones as well as fresh graduates) we have had in many years. And it’s not only the quantity, but also the quality, they’re awesome! Nothing like it happened in the past 10–15 years – just when we really needed it!

One benefit of the transition whose value is hard to overestimate is the high degree of autonomy we have at the university. When I brought money into CSIRO, I lost control. When I bring money into UNSW, I retain control, meaning I always know exactly how much money I have, and I’m the one controling spending – this is so important to operating well and gives us the stability and flexibility we need. And UNSW is in general a so much saner environment than CSIRO – hard to appreciate fully if you haven’t experienced them both.

What does this mean for the seL4 Community?

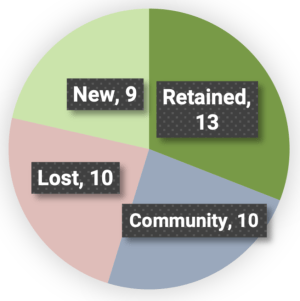

Had TS gone under, it would have spelt real trouble for seL4. The community has grown and matured a lot, but some leadership is still needed (now jointly provided by TS and Proofcraft). And while TS has been temporarily weakened, this is being quickly reversed, as evident from the recent pull requests coming from some of the new TSers.

Beyond this temporary rebuilding phase, there is a strong overall benefit to the community, in that core seL4 skills are now spread much wider, to a larger number of organisations, where people continue to be paid to contribute to seL4. This is critical to scaling up – to be successful, the community must reduce its dependency on TS. The part labelled “community” in the graphic represents those ex-TS people now working for other ecosystem players. In the past, most people leaving TS were lost from the ecosystem, now many are moving inside the ecosystem, strengthening it in the process. Furthermore, I have hope to draw some of the “lost” souls back into the community with some of the exciting things happening in the background.

This migration of talent goes along with a refocus of TS: Arguably, for the past few years we were focussed too much on technology transfer and applied research (although we have kept performing ambitious research, such as the multi-award-winning time protection project). Some of that tech transfer work was needed to accelerate the adoption of seL4, but the short-term focus and pressure to earn revenue in Data61 didn’t help. The maturing of the commercial seL4 ecosystem allows us to get away from pure engineering work and focus on research, with a healthy mix of applied and fundamental work (but always driven by real-world problems).

The old model is shown in the first diagram: TS did everything. Obviously, this is not a sustainable model for a growing ecosystem. The new model is shown in the second diagram: TS still drives the research, but development and deployment is now mostly done in industry – as they should. TS is also broadening its research from the kernel to the full system, an example is the provably secure general-purpose OS project, which is defining the next generation secure OS design.

At the same time, we keep close contacts with industry, which helps us understand real-world problems, which, in turn, drives our research. The seL4 Foundation is a great enabler of all this. The obvious advantage of this model is that it scales better, and we hope that eventually other universities will join on the research side to improve scalability further. Another advantage is that development and deployment is now mostly industry-funded, so public-sector support can focus on the research. And indeed, most of the financial support to TS is from government organisations; not all of the sources are public knowledge yet, you’ll hear more of it over the next few months 😉.

If you want to hear a bit more about TS’s transformation, watch my LCA talk.

TS Rocks – Again!

It was a near-death experience for TS, but we survived and are now stronger than we have been in a long time.

We’re operating in the massively saner environment of UNSW, where we have autonomy and, importantly, retain control over the money we bring in. We have the money to work towards our vision (we can always do with more, there are projects I’d like to accelerate, but our present financial position is strong). We have lost a lot of experience, but we have an incredibly strong team of fast learners, so it won’t take too long to recover that aspect, and the culture of the team is more inclusive and supportive than it’s ever been.

And we have awesome support from management. I had all but forgotten how it feels to be considered an asset rather than a problem, and, instead of wasting most energy on pushing management out of the way, having a boss who sees it as his main job to help you succeed. Management that thinks it’s their job to enable you to work at your best? Feels like heaven. What a difference from an environment where excellence is a problem, and people are treated like crap!

In short, TS is back, and moving fast. You’ll keep hearing our success stories – stay tuned!

I’ll leave you with the future of TS: The students and graduates who have joined us in the past 6 months. Clearly, the future is bright!

It’s now almost 9 months since CSIRO’s Data61 announced it was abandoning the Trustworthy Systems (TS) group. In previous blogs I have fact-checked the CSIRO CEO’s Senate Estimates evidence as well as CSIRO’s public statements about this, and pointed out how these statements omitted important facts or were outright dishonest.

Before I leave this chapter behind for good, I feel a responsibility for shedding a bit more light onto the machinations inside the organisation that is funded by my (and your) tax dollars. I’ll show that the abandoning of TS didn’t come out of the blue, but was the culmination of years of undermining what was arguably the most successful group in Data61. I’ll skip over the outright lies I encountered (of which there were plenty), as I have better things to do than defend a defamation case where I’d have to rely on the memories of other people (even though it would be fun to table all that material in court and watch people being cross-examined). There’s plenty of other appalling stuff to talk about.

Specifically, I’ll demonstrate that the abandonment of TS wasn’t just the result of a change of strategy (which in itself would be highly inconsistent). To the contrary, I’ll show that the dismantling of TS was going on for years.

Everything factual I write below (except where clearly expressed as suspicion or speculation) is witnessed and documented. In fact, I think this information should all be FoI-able (and I herewith explicitly wave my privacy rights in respect to any documents relevant to this blog). Should you succeed in accessing any of them through FoI then feel free to shoot me a copy, I’ll happily put it up here.

Pre-History

How did we end up in CSIRO’s Data61? Not voluntarily.

TS (under the previous names ERTOS and SSRG) came to fame in NICTA, the research organisation set up by the Australian government around 2003. After some false starts it became a great organisation, committed to excellence, and encouraging risk-taking and a long-term view. It was an environment where TS prospered, and achieved things no-one else could (and few thought even possible), becoming famous around the world for our work on creating and verifying the seL4 microkernel, and related activities. Core to that success was our ambition, aiming high and working hard to achieve our goals. This was enabled by a critical mass of excellent people, and the strong integration of complementary skills, especially operating system (OS) design and implementation with formal methods (FM). The team was worldwide unique in this, and made the best of it.

The federal government in its wisdom de-funded NICTA, and it was merged with the ICT parts of CSIRO to form Data61 in July 2016.

Early CSIRO Years

Money was tight after the merger, and there were budget cuts right from the beginning. Despite management assurance that cuts would be vertical, the opposite was true, cuts were horizontal, across the board. Despite our track record, we were probably hit hardest.

Why? Because we were so successful.

We had a large amount of external revenue, resulting in many people hired on fixed-term positions (while the rest of Data61 staff were mostly continuing positions). This made it easiest to cut TS, by not extending positions.

Sounds more than a bit like penalising success, doesn’t it?

The “justification” given by management was the impending “funding cliff” for TS. We had at the time a number of projects nearing the end of their funding period. Among them was the DARPA HACMS program, by any definition a huge success, which demonstrated that “this formal methods stuff” was useful in the real world, leading to a massive re-think in US defence circles. HACMS came to an end in early 2017 (although there were some subsequent transition projects that provided further funding), other projects winding up at a similar time. While we notionally had no follow-up funding lined up, we knew it would come within a year or so (as it did), and had set aside significant reserves to bridge that period. As soon as we became part of CSIRO, management took away all of our reserves.

To add insult to injury, a middle manager told us “you should have planned better” – that manager was well aware of our reserves having been taken! Rarely in my whole life was I as angry as at that moment, and I really had to constrain myself from turning violent. Just mind-boggling.

It should be obvious to everyone that grant money and other external revenue for a research group are inevitably lumpy, and you need to smooth it out, by creating a financial buffer. The highly-qualified people we need for our work in TS don’t grow on trees, you can’t just fire them and then expect to re-hire a year later. Besides, such hire-and-fire attitudes are obviously poison to team culture (and TS always prided itself of a positive, supportive culture). Plus our people have highly sought-after skills, so you hold on to them as long as you can. In contrast, the attitude among CSIRO management seems to be that people are fungible, you can simply shift them from one job to another. (I have seen that attitude at work many times in different contexts, hard as it seems to believe.)

The result is shown in the chart on the right: The core seL4 team lost more than a quarter of its staff in the course of a year – a terminal decline unless reverted. Amazingly, in April’17 the DARPA I2O Director (one step down from DARPA’s boss) wrote a letter to Data61 management pleading not to defund us. Didn’t have much effect.

The consequences were predictable: The TS team, which always prided itself for under-promising and over-delivering, ran massively overtime on a key project – a direct consequence of the management-forced staff attrition, done despite plenty of warning. Did the responsible manager get sacked? Of course not, despite the detrimental effect this had on team morale as well as our reputation.

Working on Plan B

We clearly needed a way out of this situation, and I was working on a Plan B from late 2016. The big challenge was, of course, the lack of a financial buffer, which meant it wasn’t possible to just move the team across to UNSW or a commercial entity. For years I had brought in millions a year of external funding for the group, and brought it all to NICTA (and then Data61), nothing of significance went to UNSW. Consequently I had very limited reserves I could control at UNSW, and they were quickly used up to rescue a small number of most important people Data61 refused to keep on. So, not much could happen until at least one big externally-funded project would come in.

This happened throughout 2017. We knew from Q2’17 that we would be getting several large projects – demonstrating that the “funding cliff” was non-existent.

When, towards the end of the year, I had a big project lined up that I was going to take to UNSW, Data61 management’s attitudes changed dramatically: They now agreed to conditions that allowed us to operate as we needed to.

Right of First Refusal and Staffing Flexibility

In exchange, I had to agree that any external funding I generated that could be taken to CSIRO had to be offered to them (right of first refusal).

This means Plan B was off (for the time being).

But I did at around the same time attract funding that could not be taken to CSIRO, as some funding sources aren’t accessible to them (eg the Australian Research Council, ARC). This means that I had to hire staff at UNSW to work on those projects. Which creates a new challenge: For a tightly integrated group such as TS it doesn’t make sense to have all staff allocated to one particular project (or group of projects) permanently. The needs of many projects change over time, and we had always moved people between projects. In fact, we considered this part of skills development: Giving junior staff exposure to multiple projects and tasks.

To make this work in practice, an agreement was put in place that allowed us to move staff between UNSW and CSIRO projects, and true up salary expenses quarterly. This worked very well while it lasted.

The special agreement with UNSW even helped Data61 get around internal limitations of staffing numbers. We ended up hiring all new TS staff through UNSW, even when they were to work primarily on CSIRO projects – to help Data61 manage their head-count limit, all with the explicit blessing and support of Data61 management.

But Data61 management rewarded our (and UNSW’s) helpfulness by screwing us, details below.

Back to the Bad Old Days

Things worked well as long as we attracted plenty of money. I don’t have the numbers to prove it, but I’m fairly confident that TS was by far Data61’s biggest source of revenue that was not Australian tax dollars.

However, as soon as a bunch of projects neared completion, without a seamless transition to new funding, things changed again. Our agreements were simply abandoned, without even the promised consultation. I had adhered to our agreements to the dot, Data61 management didn’t.

But it got worse.

Things went back to where they were in 2016: TS was being dismantled, by not extending expiring fixed-term positions, and not replacing any voluntary departures. Except that it was actually worse than that.

Outsourcing Redundancies to “Partners”

There were government funding cuts at the time, and CSIRO announced proudly that they would manage them without sacking people. Which is technically correct, because they outsourced the sackings.

In Sep’20, Data61 management told UNSW that they were revoking funding for all the positions we had hired at UNSW for Data61 projects (and on Data61 money), and hat UNSW would have to let go of the staff or find other funding sources for them. Some of them had been hired on multi-year positions, with the explicit approval of Data61 management! There is no other way of putting it: CSIRO outsourced their redundancies to their “partner” UNSW – without any negotiation! UNSW was forced to make people redundant, with the reputational risk resulting from that, so CSIRO could claim that they were weathering the government funding cuts without redundancies. Way to treat your partners, who were doing you a favour!

I’m not a lawyer, but it seems to me that UNSW would have an excellent case of suing CSIRO for the money, given there were written commitments to fund those UNSW positions. UNSW probably decided the amount at stake wasn’t worth an expensive and high-profile court case, and in the end the loyal TS people were thrown under the bus. I can’t blame UNSW too much for that decision, which was really forced by Data61 management’s actions. But I’ve rarely seen the level of unethical behaviour as displayed by Data61 management in this issue, and it’s something for which I will never forgive those individuals.

Cancelling a US Air Force Grant

Our high-profile, multi-award-winning Time Protection project is funded through a grant from the ARC. As CSIRO is not eligible to apply for ARC funding, this had to be a UNSW project (in collaboration with the University of Melbourne), without violating the first-right-of-refusal agreement.

In 2019 the US Air Force’s Asian Office of Aerospace Research and Development (AOARD) also offered funding for the project. AOARD were aware of the ARC funding, the awarded amount of which was about 2/3 of what we had applied for, so it was great that AOARD offered to cover part of the shortfall. AOARD had been funding a number of our projects for many years, going back to the NICTA days, and were generally highly supportive of us.

It would have been easiest to send the AOARD money through UNSW, given that existing project activities were all at UNSW and Melbourne Uni. However, in line with the right-of-first-refusal agreement, we offered the money to CSIRO, which accepted it. This was a one-year grant with two annual extension options (at AOARD’s discretion).

In October 2020, AOARD exercised the first extension option and gave us a second year of funding, which was accepted by Data61 management immediately, was invoiced and the money received by CSIRO soon after.

Then a few weeks later, completely out of the blue, Data61 management decided to return the funding to AOARD. I was livid – I had never seen anything like this happen before! I told them that if they didn’t want the money, they should simply hand it to UNSW and let them be responsible for the project. (There was a precedent for that, where a TS-affiliated colleague from another university got money from Intel, which went to CSIRO under right-of-first-refusal, and CSIRO unceremoniously handed it to the respective university.) CSIRO refused to do this, and just offered to have the CSIRO bizdev person in charge put me in contact with AOARD. (As if I needed the help of some nobody to talk to the organisation that had been funding our work for years, because they knew and trusted us! Of course I had been talking to them immediately, but their hands were tied as long as CSIRO didn’t cooperate, which they did not.)

In the end I was powerless to stop the destructive move, and the money was lost irrecoverably. This could not have happened had I taken it through UNSW in the first place, but I honoured the right-of-first-refusal deal. CSIRO’s way of saying “thanks” was to screw me and the other people on the project.

On 1 December 2020 I made a formal complaint against Data61 management, alleging

- lack of due process

- lack of transparency, in clear violation of CSIRO Values

- lack of honesty in the justification

- discriminatory treatment (given the Intel precedent)

- reckless and unjustified action that undermines my reputation.

Nothing happened, despite multiple reminders. Finally after two months, and another reminder, I got a response within less than a day. The complaint was dismissed on 4 March 2021, without any attempt to address any of the specific issues I had raised, and simply claiming (falsely) that my failure to take up the offer to put me in touch with AOARD had undermined my case. (I replied that I had been talking to them, but got no response.)

Unfortunately, this episode is completely in line with my experience of how CSIRO management operates. No more than lip service is paid to processes, consultation and “CSIRO values”. Of course, after all I’ve seen I expected nothing else, but went through the process to have things documented. I repeat that I explicitly wave my right to privacy in this matter, in case someone wants to FoI the paper trail.

Bad Old Days – in More Ways that One

2020–21 really was déja-vue. They ran down our staffing levels, as shown in the chart below. And they didn’t care about consequences, including how critical a particular person was to the externally-funded projects. I kept warning about not being able to deliver; management didn’t care. The result was that, for the second time, and again squarely due to the actions of Data61 management, we were horribly late on an externally-funded project. In fact, the late project was part of the very contract I wanted to take to UNSW in 2017–18 but Data61 was keen to get. The project was abandoned by the funders when Data61 kicked TS out.

The chart on the right shows our declining staffing levels during the period. The big dip from Nov’20 to Jan’21 were the outsourced redundancies; the dip in April’21 is when our verification stars left to set up Proofcraft, and my long-standing brother-in-arms Peter Chubb left in disgust. (Fortunately I had enough money left to hire Peter at UNSW, where he continues to be the backbone of systems work in TS.) But it’s also clear that the assault on TS started well before that, and continued to the May’21 announcement of shutting the group down completely. By that time, we had already lost half of our staff.

The End

This was the situation when in May’21, Data61 management announced that the TS group would be wound up. While it came as a shock to most staff, the above shows that the writing was on the wall, and I fully expected it. Needless to say, morale had been low for a while. Nevertheless, the awesome TS team kept delivering, albeit at (temporarily) reduced efficiency.

TS survived the betrayal, as you all know. How this happened, and how it got us into a much stronger position than we had been in a long time, will be the subject of the final blog in this series. I want to keep the way forward well separated from the depressing past, and simply stop thinking about CSIRO and the dishonesties and betrayals that are associated with it.

A few months back I fact-checked the CSIRO CEO’s evidence to a Senate Estimates Committee hearing. At the time I promised more fact-checking of CSIRO’s communication about the abandoning of Trustworthy Systems (TS). Well, it has taken much longer than I hoped, mostly because I’ve been working 60-hour weeks to undo the damage caused by CSIRO to the TS group, and seL4 and its ecosystem. Six months down the track I’m winning, but it’s taking all my time and energy.

So, somewhat delayed, here we go…

CSIRO Statements

There are at least six ways in which CSIRO communicated about the dismantling of TS:

- Statements made by management to all Data61 staff

- Statements made by management/HR to TS staff

- Statements made by management to unions

- Statements made by CSIRO to the media

- Statements made by CSIRO to industry partners

- Statements made by the CSIRO CEO to the Australian Parliament

Let’s look at them in turn.

1. What CSIRO told all Data61 staff

Exactly 6 months ago, on 17 May 2021, CSIRO’s Data61 held an all-staff meeting where management informed staff about the new Data61 strategy. While there were some extremely vague statements about the new strategy earlier in the year, this was the first time things became concrete.

Some of the statements made there by management are (and I point out that none of the following are literal quotes, as I do not have access to a recording/transcript or the slides presented, only my sparse notes):

- Data61 will be doing “fewer, bigger things”

- Data61 will be all about AI

- Data61 will continue to do cybersecurity, but only

- cybersecurity for AI, and

- AI for cybersecurity

- Data61 will exit “less aligned areas”

- There will be “minimal impact” on university collaborations (so-called CRP agreements)

We’ll refer to those statements as S1.1–S1.5 in the following.

2. What CSIRO told TS staff

On 20 May, TS staff received email from CSIRO HR stating (among others):

In relation to the Trustworthy Systems Group, the review has identified that while this group has achieved impact in the past, the capability area is not aligned with the three new strategic goals of the Data61 Strategy and fewer and bigger things identified. In particular, we will be focusing on the intersection of AI and Cyber, not just any cybersecurity and related research.

This literal quote barely qualifies as English, but let’s try to separate it into individual statements (referenced as S2.1–S2.3 below):

- the capability area is not aligned with the three new strategic goals of the Data61 Strategy

- [TS is not aligned with doing] fewer, bigger things

- [Data61] will be focusing on the intersection of AI and Cyber, not just any cybersecurity and related research

3. What CSIRO told unions

On 21 May, CSIRO management sent an Advice to Unions, stating (among others):

Data61 will pivot away from areas that lack critical mass, are not at the cutting edge scientifically, and/or do not have sufficient market demand.

Separating the sentence into individual claims S3.1–S3.3, we get:

- pivot away from areas that lack critical mass

- [pivot away from areas that are] not cutting edge scientifically

- [pivot away from areas that] do not have sufficient market demand

4. What CSIRO told the media

On 21 May, a CSIRO spokesperson was quoted as:

The Trustworthy Systems group is focused on the area of formal methods for design, implementation, and verification of software systems. It is [sic] mature area of technology that CSIRO has invested in over a number of years and is now well supported outside the organisation

https://www.innovationaus.com/data61-dumps-world-class-sel4-security-team/

We separate the claims into:

- [what TS is doing] is a mature area of technology

- [TS technology] is now well supported outside the organisation

5. What CSIRO told industry partners

An email sent to industry partners (which reached me at my UNSW address via third parties not encumbered by NDAs) said:

- Data61 is committed to deliver to our partners, such as [company], especially on current projects and any work-in-progress.

6. What CSIRO’s CEO told parliament

In my earlier blog I fact-checked the testimony Dr Marshall, CSIRO’s CEO, gave to the Australian Senate. The conclusion was that some of it was factually incorrect and relevant information was omitted (and my clarifications/corrections to Dr Mashall were not passed on the parliament). I won’t go through all of it again, but am extracting the bits that are relevant for contrasting other statements made by CSIRO:

- [TS technology is] very mature and it’s open source, so it’s difficult to see an opportunity to build an industry in Australia or to derive a national benefit from that technology.

Let’s examine these statements in turn

Statements to all staff

S1.1: “Data61 will do fewer, bigger things.”

TS was unique inside Data61 in tackling large problems with critical mass and a unique combination of skills. Our strategy explicitly said for years “we solve problems no-one else can” – and we did.

Assessment: This is exactly what TS is known for, so that’s not a reason for destroying TS, to the contrary.

S1.2 + S1.3: Data61 will be all about AI, plus cybersecurity as long as it’s for AI systems.

It’s obviously management’s prerogative to define what to include or exclude in their research strategy (if you can call a bunch of buzzwords a “strategy”). However, “cybersecurity for AI systems” would clearly include the work of TS. Here are a few proof points:

- The DARPA HACMS and CASE programs explicitly use TS technology to protect autonomous systems from cyber-attacks.

- seL4 Foundation member Ghost stated: “There is more research required into how to architect, construct and integrate an AI application on a trusted system of this complexity, and how we achieve this at scale. Investing in AI research without investing in trustworthy systems research will greatly diminish the impact and applicability of AI to real-world products.” Clearly, TS technology ensures cybersecurity of AI systems.

- Another seL4 Foundation member, Horizon Robotics, said: “To address the challenges of safety, security and realtime in autonomous software, a fundamental high quality state-of-the-art microkernel is needed. We are looking forward to working with members of seL4 Foundation to build mixed-critical platform and solution for next-generation autonomous driving vehicles.” Again, TS technology providing cybersecurity for AI systems.

There are more examples of this. Clearly, if you’re serious about cybersecurity for AI systems, then TS’s is the go-to technology, so ruling TS out of scope on that base is dishonest or clueless.

But it gets worse. Data61 seems to keep investing in blockchain technology. There’s nothing inherently wrong with that, but how does it fit the criteria that supposedly rule TS research out of scope? Blockchain may be a popular buzzword, but it’s not AI. And even calling it cybersecurity for AI is a huge stretch. It’s much less obviously aligned with the Data61 “strategy” than TS technology is. The same can be said about Data6’s IoT-Cloud Security work.

Assessment: Ruling TS technology out – while ruling blockchain and IoT-Cloud security research in – based of the Data61 strategy is inconsistent and dishonest.

S1.4: Data61 will exit “less aligned areas”

As above: TS research is clearly aligned with “cybersecurity for AI systems”, and is better aligned than blockchain research and at least as well as “IoT-Cloud Security”, yet they “exit” TS but, apparently, not the others.

Assessment: inconsistent and dishonest.

S1.5: There will be “minimal impact” on university collaborations (CRPs)

Well, the TS CRP with UNSW was one of the biggest (if not the biggest). It was terminated early as a consequence of the “exiting” of TS. Do you call this “minimal impact”?

Assessment: Looks pretty dishonest to me.

Statements to TS staff

S2.1: [TS] is not aligned with the three new strategic goals of the Data61 Strategy

As explained above, TS is actually aligned with “cybersecurity for AI”, as independently evidenced by external sources. Yet, the clearly less-aligned blockchain area seems to continue.

Assessment: dishonest.

S2.2: [TS] is not aligned with “fewer, bigger things”

Assessment: debunked above (S1.1).

S2.3: we will be focusing on the intersection of AI and Cyber, not just any cybersecurity and related research

Assessment: debunked above (S1.3).

Statement to Unions

These were a number of “and/or” conditions, so notionally the combination was true if at least one of them was true. Let’s look at them one by one.

S3.1: exiting areas lacking critical mass

TS used to be the by far biggest group in Data61, there’s no doubt there was critical mass. By the time of the decision, this had been withered down to about 14 CSIRO full-time staff by not replacing departures and not renewing fixed-term contracts (leading to projects being understaffed and failing to deliver on time – partners getting screwed as a result). In addition there were about 6 FTE UNSW research and engineering staff. 20 FTE is still a pretty good group size, so this claim does not hold for TS.

S3.2: exiting areas “not cutting edge scientifically”

There is plenty of work on-going in Data61 that is nowhere near “cutting edge scientifically”, but TS wasn’t one of them. Just to give one example, our work on time protection as a principled means for completely eliminating information leakage through timing channels is without doubt cutting-edge, I’d even claim it’s peerless. Just one indication is that out of 4 publications in the 2 years prior to the decision to abandon TS, that work has won three best-paper awards. I challenge anyone to publicly state that this work is not cutting edge – they’ll open themselves up to ridicule. So, this claim also doesn’t hold for TS.

S3.3: exiting areas that do “not have sufficient market demand”

In the context of TS, this one is directly contradicted by CSIRO’s own public statements, specifically S4.2, as well as the strong uptake of seL4 and the growth of the seL4 Foundation in the past 6 months. So, this claim doesn’t hold for TS.

Assessment: neither of these individual claims hold for TS, and hence using them (even in combination) as a justification for “pivoting away” from TS research is dishonest.

Statements to the media

The astute reader will notice that the complete quote avoids associating TS with cybersecurity in any way. That in itself is interesting, given that the importance of cybersecurity is much more widely understood than that of software verification, even though you can’t have real cybersecurity without verification. This looks very much like spin.

But let’s look at the individual statements:

S4.1: TS technology is mature and doesn’t require on-going support

I wrote a whole blog debunking this nonsense. I’m not going to repeat it here, but the summary is while seL4 is mature enough to be deployed in the real world, there’s plenty of fundamental research work left on seL4 itself (such as the award-winning time protection work), and there is far more research left on how to achieve real-world trustworthy computer systems.

Assessment: Utterly wrong.

Statements to industry partners

S5.1: Data61 is committed to deliver to our partners

So, they claimed they are “committed” to delivering to customers, just as they decided to get rid of all the people who have the skills for such delivery? Making such a statement to partners who are handing over large sums to CSIRO in exchange for getting research done which CSIRO is now obviously incapable of delivering is just stunning. And management can’t pretend they didn’t know that, the uniqueness of TS’s skillsets are widely known in the organisation. It’s a shame none of the affected partners sued them, they would have had a good case I reckon.

What happened in the end is that one partner terminated their multi-million-dollar project (after having handed over the majority of that cash). The others we transferred to UNSW, where they are served by the remaining TS team. However, that possibility only existed because UNSW offered to fund the team to the end of the year – without that offer (which was made after CSIRO’s announcement, of which UNSW was given no prior warning) there would be no TS team left. So if CSIRO were to make any claims (which were at least implied in some of the statements) that all is good because we’re continuing at UNSW that would add to the dishonesty.

Assessment: Blatantly dishonest.

Statements to the Australian parliament

I already fact-checked Dr Marshall’s evidence to the Estimates Committee of the Australian Senate, and concluded that it omits important facts, and especially with regard to a senator’s question about work on protecting autonomous cars from cyber-attacks (of which CSIRO has none after demolishing TS). I pointed out that the claim of “difficult to see an opportunity to build an industry in Australia or to derive a national benefit from that technology” cannot be reconciled with the fact that there is a growing number of Australian companies whose business is based on TS technology. (And to my knowledge, there is no research remaining on Data61 that can make a similar claim.)

A further dis-proof of this claim is the Laot project, funded by Australian Defence, which deploys TS technology for protecting Australia’s critical infrastructure. And silly me thinks that would be in the national interest? Particularly after Australian Home Affairs Secretary Mike Pezullo’s warning about attacks on critical infrastructure?

Moreover, Dr Marshall failed to correct the record after I provided the information to him, so he has no place to hide.

Assessment: Omits important facts.

Comparing CSIRO statements

Amazingly, CSIRO’s statements aren’t even consistent with each other. Specifically, CSIRO makes claims that TS technology:

- “does not have sufficient market demand” (S3.3);

- “is now well supported outside the organisation” (S4.3);

- “[no] opportunity to build an industry in Australia or to derive a national benefit” (S6.1).

I can’t see how to reconcile these statements with each other, especially “no market demand” on one side and at the same time “well-supported outside” (by industry!)

Assessment: contradictory.

Conclusions

The seemingly inescapable conclusion from the above is that CSIRO’s communications about TS are full of half-truths, untruths and contradictions. This is a pretty sorry state of affairs for our national science agency, and specifically its Data61 arm that is all about the future-critical information technology. Note that this is an organisation that lists “trusted” as one of its values. How can you trust an organisation that is so loose with the truth?

Unfortunately, this is consistent with the long and slow decline of CSIRO, resulting from budget cuts and political interference. I am actually amazed that there is still great science being done in parts of CSIRO, despite the oppressive culture (likened by some to the Soviet Union) created by management. Data61, despite all the propaganda about excellence etc, based on my observations (and that of others), is an organisation that fosters mediocrity and is hostile to excellence.

Four years ago, Data61’s then Chief Scientist Prof Bob Williamson FAA conducted an internal review of Data61 science. It identified 6 groups/teams as being top-class, TS being one of them. (Another one was the Legal Informatics team that got disbanded at the same time as TS.) Not much is left of those, the top people left or their teams got abolished. What is left is mostly mediocre.

The same review also identified areas that were sub-par. It was particularly scathing about one team:

This is a weak team that does not know it. Unfortunately they appear to have been favoured by the program director who has a very poor sense of scientific quality. The interview and documentation was disappointing. They are actually a reputational risk to the organisation. They should be disbanded. I doubt there is talent here that is worth retaining.

That team is continuing in Data61, and its leader has been given extra responsibilities. It’s all you need to know.

Data61 management is likely to counter this with the external “science review”, which contained a fair amount of uncomplimentary material, some of which was apparently aimed at TS, especially comments about living off the past. The problem is that we were supposed to talk only about impact, of which TS has plenty, but, of course, that’s inherently a rear-mirror view. Any reference to our on-going research and vision was censored from the group leader’s talk by management and comms people! Talk about setting world leaders up for failure!

My final conclusion is that Data61 is an institutionalised mediocrity and a waste of taxpayers’ money. Eventually, people will realise this and shut it down (or completely revamp it). While inevitable, this may take up to five years – plenty more tax dollars will be wasted until then. Depressing prospects for the tax payer.

But on the bright side: TS is over the hill and is regrowing its strength. We aren’t done yet with producing excellence and impact – stay tuned!

Following CSIRO’s abandoning of Trustworthy Systems (TS) and the seL4 technology TS developed, the seL4 Foundation has seen a significant increase in Foundation membership, including a number of new Premium Members that get a seat on the Board. Among others, this has increased the Foundation’s budget ten-fold – a great shot in the arm for the Foundation and its role of growing the seL4 ecosystem.

Does that mean a change of control of seL4?

This is a concern I have come across, mostly from people who do not (yet) understand how an open-source community such as seL4 works. (And I have been informed that people behind some of the competing, yet inferior, technologies out there are actually feeding this concern; classical FUD as it has been used against open source for decades. If someone presents you with this FUD then please point them here.)

In short, there’s no basis to this concern.

The way the seL4 community operates has not changed since we set up the seL4 Foundation in April 2020 (other than having overcome much growing pain). We have a clearly-defined and open governance structure which consists of the seL4 Board (responsible mainly for Foundation finances and recruitment) and the Technical Steering Committee (TSC) for technical leadership. I’ll explain these below, after addressing some specific concerns people have raised.

Could some organisation undermine seL4’s security?

In a word: No.

To start with, like in any other functioning open-source project, contributions to seL4 are made openly, via pull requests on the public Github, and anyone subscribed sees each of them. Any pull request is carefully vetted and discussed publicly. It cannot be merged until it passes regression tests (both traditional testing and proofs) and is approved by at least one committer, a trusted expert blessed by the TSC. You can’t buy committer status, you can only earn it, by earning the trust of the TSC. And only after this approval, the PR can be merged by one of the committers. So far, so standard for open source.

However, seL4 is special, as we all know. And what makes it special is its formal (mathematical), machine-checked proofs. With other projects, regression tests are just that: tests. They exercise the software on carefully chosen inputs and the outputs are compared to expectations. As all testing, this is inherently incomplete and cannot fully protect against bugs (or malicious code) being introduced.

The seL4 proofs are different: They are complete in a strong sense; code that is proved correct against a specification cannot have bugs as far as the specification is concerned. And by re-running proofs before merging changes, we can be certain that the code is still bug-free. That’s the really unique thing about seL4: even if the reviewers and committers fail to spot a bug that’s been introduced, the proofs will catch it (by failing).

The proofs do not yet cover all aspects of seL4, for example the 64-bit Arm version is not yet verified, multicore operation is not yet verified, nor is the boot code, cache management, and the very small amount of assembler code. Hence there is still potential for bugs in those unverified bits (and occasionally we find some). But the unverified configurations share much code with verified ones, and overall the kernel is small, so that the risk is far reduced compared to almost all other software. Deliberately introducing a vulnerability even into those parts would be extremely hard. And it would be detected as soon as that code gets verified. But, as we always point out, when running an unverified configuration you have no guarantee. A good reason to work together to raise funds for verifying those missing parts!

Also, to repeat, the contribution process has not changed since we set up the Foundation. Members cannot buy committer status, and they certainly cannot buy changes to the code.

How about Board membership?

According to the seL4 Foundation Fund Charter, the seL4 Board manages the seL4 Foundation Fund, and the purpose of the seL4 Foundation Fund is “to raise, budget and spend funds in support of the seL4 technical project carried out by [the seL4 Foundation]”. In other words, the Board’s role is to raise money and decide how to spend it for the benefit of seL4. It has no say on technical decisions (other than by prioritising specific activities through directing money there).

The Charter also stipulates that the Board “will strive to make decisions based on consensus.” This is real: We are yet to experience a case where a Board decision was not unanimous (other than members abstaining for conflict-of-interest reasons). In fact, our experience is that Board members very much trust the seL4 Founders (June, Gerwin and myself, who have guaranteed Board seats for five years renewable). A diverse Board representing a breadth of stakeholders yet unified in trust is a great asset for the seL4 community!

What if that trust broke down?

A single rogue Board member cannot do much damage (other than making the Board’s life harder), and the fixed representation of the founders acts as a further stabiliser. If a significant number of Board members tried to pull into a different direction, then that would be a problem, but would be a reflection of serious issues in the seL4 community as a whole. I do not have any concerns in this respect. Our Board members, whether elected by members or representing Premium Members share a common interest: they are there because they are vested into the technology; their companies are developing their products around seL4. Their interest is to see seL4 flourish, not undermined.

How about the TSC?

The role of the Technical Steering Committee is defined by the seL4 Foundation Technical Charter. Its role is defining the rules governing development of seL4 and the core open-source technologies around it, enable and foster technical collaboration and provide technical oversight.

Who is on the TSC?

Its members are the original committers, plus further trusted contributors the TSC decides to invite. So it’s really the trusted technical leadership – you can’t buy your way in there. (Note that this is different from, e.g., RISC-V International; Premium Members get representation on the RISC-V TSC.)

The seL4 TSC also blesses new reviewers and committers, ensuring that only people who have earned the trust of the community can authorise changes to the mainline code (but never on their own).

Will CSIRO’s abandonment slow seL4 development?

This is an obvious concern, and there was a very serious risk that this could happen.

Financially, the impact is minimal. In CSIRO we had some base funding that helped us maintain the technology. While this has disappeared, it was quickly picked up by the community. Community contributions have increased massively since we moved out of CSIRO, and the Foundation membership has grown (increasing its budget ten-fold). Those two developments together are easily making up for the loss. And all major developments have been externally funded ever since TS became part of CSIRO. CSIRO’s commitment to seL4 was never more than superficial.

The biggest risk right after CSIRO’s move was to lose most of TS’s people. Mountains of money are no use if you don’t have the skilled people to spend it on. And that risk was very real: After word of CSIRO’s actions got out, many people had job offers within days. Had not UNSW, within a day(!), stepped up to support the team for half a year, the result might well have been catastrophic for seL4.

Thanks to UNSW, that has been avoided. We still lost many people, but most stayed in the community and keep contributing as part of their new job. This is healthy, as it broadens the community and helps it mature to the point where it is no longer dependent on a single organisation. And UNSW gave us the buffer we needed to line up new funding to continue the TS team and grow it back. We’re in the middle of this, and it’s going well – you’ll hear some announcements soon. For now I can just say how extremely grateful we all are to Aaron Quigley, Head of School of Computer Science & Engineering at UNSW, for moving quickly and decisively to protect TS and seL4.

How will we fund major projects?

There are a number of major projects we’re looking to fund, mostly the “big ticket” verification projects (AArch64 and multicore), some medium-sized verification projects (completing the RISC-V verification story and completing verification of the MCS kernel), as well as a number of systems projects (such as multicore VMM, device virtualisation, an actual seL4-based OS). We need external funding for those, and that would not have been different with CSIRO.

But we’re in a much stronger position now. The community has realised that these things won’t happen on their own, they need to contribute. And the community, and especially the Foundation, has grown, which helps. And we have a much stronger (and growing) ecosystem of companies providing services around seL4, which itself strengthens the ecosystem and will lead to further investment. One of these companies is, of course, Proofcraft, the provider of verification services founded by the leaders of the seL4 verification.

The future

Personally I’m much more bullish about seL4’s future than I was a year ago (and those who heard me talk about seL4 then will understand that this means something!) CSIRO was, in many ways, a weight that slowed us down. When I bring money into UNSW, I remain in control of the funds, and can hire as needed. At CSIRO, in contrast, we were always at the mercy of management, much of which are people who have never achieved anything of scientific significance in their life, but think they know better than those who have – definitely a poor fit.

Since the divorce, things have been moving faster in the right direction than I anticipated, and by its anniversary we will be in a far stronger position than before. You’ll see some indications of that in the near future.

Stay tuned, stay calm, and trust your kernel (and the team behind it!)

On 3 June 2021, Dr Larry Marshall, CEO of CSIRO, gave evidence in an Estimates hearing in the Australian Senate. The official transcript is available from the Australian Parliament’s web site. Senator Chisholm asked a number of questions regarding CSIRO’s decision to abandon the Trustworthy Systems Group (TS), one of them Dr Marshall took on notice. The answer was published last week (Question 71), so I thought I might do a little bit of fact checking.

Dr Marshall’s oral evidence

Referring to an article in InnovationAus, Sen Chisholm asked about Data61’s job cuts in general, and then starts homing in on TS:

Why has CSIRO announced it will discontinue funding for the world-leading Trustworthy Systems team?

To which Dr Marshall replied:

I thought that was what you were referring to earlier. This is the team that developed the seL4 kernel, which is a microkernel that’s very good for security of mobile phones. That breakthrough was made back in the early 2000s by NICTA and UNSW. The team that you’re referring to is actually on campus at UNSW in Kensington. Unfortunately, that technology was licensed to I think Qualcomm—don’t hold me to that—for a one-time fee. I say ‘unfortunately’ because that technology has gone through two billion mobile devices. Unfortunately, there was no ongoing royalty arrangement with the deal that was done at that time. NICTA did spin out a company to try to commercialise that technology—OK Labs—but it closed its doors in 2012. The NICTA team continued to develop the seL4 kernel from about 2009. When we acquired NICTA we continued to work with them.

Not everything here is correct. In particular, seL4 has no more to do with mobile phones than with autonomous aircraft, autonomous cars, medical devices, critical infrastructure protection, defence systems – it’s used in all of these. Dr Marshall confuses seL4 with the earlier L4 kernel that became OKL4, which we last touched in research in 2006, and which ended up on billions of Qualcomm chips and iOS devices.

And the comment about a breakthrough in the early 2000s? This sounds to me like he’s trying to say “these guys did some great work 20 years ago but not much since.” Such an implication would be blatantly wrong. Our first breakthrough was the verification of seL4 in 2009, the first proof of implementation correctness of an operating system, something people had been trying for 30 years. But that was not the end of it: We followed it up with further world-firsts: proving that seL4 enforces integrity and availability, later proving that it enforces confidentiality, extending the correctness proofs to the binary code (no longer having to trust the compiler), and the first sound and complete worst-case execution-time analysis of a protected-mode operating system. And our latest flagship project, time protection, presents a principled, systematic approach for preventing information leakage through timing channels – a serious real-world problem almost everyone puts into the too-hard basket or tries to combat with patches and workarounds. While only at the beginning, this project has already won three Best Paper awards. I’m yet to see any project in Data61 that is comparable in ambition and potential real-world impact, yet credible given the track record of the people behind it. So any implication that we’re resting on our laurels is just plain BS.

But maybe I’m just paranoid and he didn’t mean to say what can be read into his statement. Besides, we can’t reasonably expect Dr Marshall to be on top of all the detail of what’s going on in Data61, so I’m giving him the benefit of the doubt.

Dr Marshall continues:

There is a challenge with that technology. It’s very mature and it’s open source, so it’s difficult to see an opportunity to build an industry in Australia or to derive a national benefit from that technology. Given our priority is artificial intelligence, we chose to pursue that and focus our resources where we thought we could derive greater national benefit.

Very mature? Well, it’s mature enough to be deployed in real-world systems, but that does not mean there is no research left – to the contrary, as I had pointed out in a recent blog. It’s as if solid-state electronics was considered “mature” and no longer in need of research once the first transistor radio shipped. We wouldn’t have laptops or mobile phones with that attitude.

The argument that because it’s open source “it’s difficult to see an opportunity to build an industry in Australia or to derive a national benefit from that technology” is outright bogus. We’re building up an ecosystem around seL4 that has already delivered a lot of economic impact, including multiple new companies, development for Australian defence, and projects using seL4 to protect Australia’s critical infrastructure. In fact, CSIRO’s abandoning of seL4 is particularly stunning given that less than two weeks earlier, Home Affairs Department secretary Mike Pezzullo was quoted in the media with truly alarming statements about threats to Australia’s critical infrastructure. seL4 is the best foundation for providing strong protections here – how can investing in it not further the national benefit? I find that statement truly mind-boggling. Can CSIRO management really be that oblivious to the power of its own technology?

Sen Chisholm:

Are there any outstanding contracts requiring the ongoing services of that team?

Dr Marshall:

That team has a number of contracts, which is good, because it made it easier for UNSW to work with them based on that external revenue. It would be great if they continued to do that and even better if they were able to figure out how to create a company around that. That would be a great outcome. Our conclusion was that that’s not really feasible in Australia, which is why we chose to discontinue the work.

Now this is where we’re getting into dangerous territory as far as the truth is concerned. For one, Data61 told contract partners:

Data61 is committed to deliver to our partners, such as [company], especially on current projects and any work-in-progress. [1]

Note, this was before UNSW stepped in to rescue the team! Without UNSW’s action, the team would be gone by now, and the delivery on the contracts simply impossible, as CSIRO has no-one else familiar enough with the technology. Which raises an interesting legal question: How can a team be declared redundant, when it is needed to deliver on commitments to funding partners? But I digress.

It is worth noting that Dr Marshall offers two reasons why Trustworthy Systems and the seL4 technology was abandoned: (1) it’s not AI (true, but see below…) and (2) you can’t turn it into a startup. The second one is rather curious: if the ability to become a startup is a necessary condition for research in Data61, then they’ll have shut down most of the joint. While TS does not have to be shy about its economic impact, there’s not a lot in Data61 that can match it. But then, measuring economic impact isn’t easy and I’m not an economist. I’ll leave this point for another day.

However, Sen Chisholm homes in on reason (1):

Okay. AI has been identified as a sector that is going to be really important in autonomous cars and so forth. What funding is being directed to ensuring that we have a system that’s safe from hackers and is robust, I suppose, in its use?

CSIRO takes this question on notice.

CSIRO’s written answer

CSIRO’s response has just been published. I quote it in full:

Artificial intelligence (AI) and cybersecurity are growing areas of investment and collaboration for CSIRO, with a specific focus on the use of AI to protect against cyber-attacks as well as mitigating against new forms of AI-enabled cyber-attacks.

Work to ensure security of systems is an integral part of larger CSIRO projects. Thus, it is not possible to provide accurate funding figures for this specific area. CSIRO projects of relevance include:

1) cutting edge machine learning and AI technologies to generate realistic computer systems and assets for the purposes of deceiving intruders

2) advanced AI-driven anti-phishing techniques to defend systems from social engineering or advanced AI-driven phishing attacks

3) technologies for detecting vulnerabilities in AI systems and software applications, and corresponding countermeasures

4) advanced technologies to make security solutions usable/human-centric, combining the benefits of AI and human intelligence

5) advanced AI-driven solutions for preserving data privacy

6) advanced methods to detect fake news and protect social media users using AI

7) advanced techniques to increase the trustworthiness of AI systems

8) AI and cybersecurity projects in partnership with Defence Science and Technology under the Next Generation Technologies Fund to realise new capabilities to support Australia’s Defence.

[Note that CSIRO’s response has a list of bullet-point items here, I numbered them for easier reference.]

The astute reader will notice that this does not actually answer Sen Chisholm’s question at all when he talks about protecting autonomous cars! At best items (3) and (7) are loosely related, the others are utterly irrelevant to the question.

How does this relate to seL4?

What Dr Marshall did not say is that seL4 is not only obviously useful for protecting AI systems, but is actually being deployed in many places for exactly that purpose. I give a number of examples.

DARPA: In the DARPA HACMS program we worked with project partners on protecting a military autonomous helicopter from cyber attacks – successfully. We are presently participating in DARPA’s successor program CASE, which is about scaling up the technology and is still centered around autonomous aircraft. And just for the record (so Dr Marshall cannot claim that this is about aircraft, while Sen Chisholm asked about cars): In HACMS, seL4 also secured autonomous army trucks – successfully.

Autonomous passenger cars: But it’s not just the military, the automotive industry is also recognising that seL4 is the best technology for protecting autonomous vehicles from cyber attacks.

- In my previous blog I quoted Dr Daniel Potts of of autonomous driving company Ghost Locomotion. Ghost is a member of the seL4 Foundation, because they think that the seL4 technology is required for keeping the AI system secure. “Investing in AI research without investing [in] trustworthy systems research will greatly diminish the impact and applicability of AI to real-world products“, he said.

- seL4 Foundation member Li Auto, makers of premium electric cars, say: “Li Auto’s team will develop a secure highly extensible real-time open platform for next generation self-driving vehicles based on the micro-kernel OS seL4. The platform will enable an ecosystem for third party application developers.“

- seL4 Foundation member NIO, also a premium electric-car maker, says: “The Digital Systems department at NIO is missioned to develop the most advanced software platform for the next-generation autonomous driving vehicles in the industry from the ground up. This platform is internally named NIO Vehicle Operating System (NVOS) and based off seL4.“

- And Horizon Robotics, also a member of the seL4 Foundation, calls themselves a “a global leader in the development of artificial intelligence computing platforms.” And they go on to state: “To address the challenges of safety, security and realtime in autonomous software, a fundamental high quality state-of-the-art microkernel is needed. We are looking forward to working with members of seL4 Foundation to build mixed-critical platform and solution for next-generation autonomous driving vehicles.“

The last three quotes can all be found on the seL4 news page.

So, when it’s about protecting AI systems from cyber-attacks, industry goes for seL4. The very technology CSIRO thinks is not relevant to their agenda.

An honest mistake?

Maybe Dr Marshall didn’t know that when he gave his evidence to the Senate?

Quite possible he didn’t know at the time he appeared before the Senate, he cannot be expected to know everything that’s going on in CSIRO. But he definitely knew by the time he signed off on CSIRO’s answer to the question on notice.

How do I know?

I told him. In an email to Dr Marshall I said:

I can help you with [the answer to Sen Chisholm’s question]: this funding has just been dramatically slashed. seL4 and the rest of the Trustworthy Systems (TS) technology is proven to protect autonomous vehicles from cyber attacks, ever since the DARPA HACMS program demonstrated four years it could thwart such attacks against an autonomous helicopter and autonomous army trucks (see https://ts.data61.csiro.au/projects/TS/SMACCM.html). Since then, TS technology is being designed into military as well as civilian autonomous vehicles. (Besides a lot of other uses, such as secure communication devices, including those built by Canberra-based Penten and in daily use in the Australian Army.)

[Note that the link in the quote may no longer work by the time you click on it, due to our web site migrating to UNSW. The new link is https://trustworthy.systems/projects/TS/SMACCM.]

Dr Marshall acknowledged that email. So he cannot pretend he didn’t know.

In the same email I also challenged his comments on startups:

I also found your claim that TS couldn’t be turned into a startup to be quite misleading, given that it has already generated multiple startups, the latest only a few weeks ago.

I’m looking forward to reading your explanation/clarification in the Hansard, and am happy to provide more detail as needed.

He obviously passed on the opportunity to set the facts straight.

Summarising

There’s clearly more to the story than Dr Marshall and CSIRO said in their answers to Sen Chisholm.

Does that constitute misleading parliament? I don’t know, I’m not a lawyer. But I wonder what Sen Chisholm thinks.

And I never thought I had to fact-check our national science agency. Not fact-checking the science, “just” the management. Still, truly saddening.

Stay tuned for more fact-checking of CSIRO’s communication about the abandoning of Trustworthy Systems.

[1] Just so no-one can claim I am revealing sensitive CSIRO internals: This information about CSIRO promises made to project partners came to me from third parties to my UNSW address, unencumbered by non-disclosure agreements.